Most AI-assisted PR review setups I’ve seen stop too early. They generate comments, drop them on the PR, and that’s it. No follow-up. No verification. No learning.

That’s not really a loop. It’s a one-way pipe.

I spent some time this week wiring Windsurf and the GitHub CLI together to see if I could close that loop properly. These are the notes.

Table of contents

- What “closing the loop” actually means

- The setup

- The workflow

- Where Windsurf fits

- Where the GitHub CLI fits

- Things that surprised me

- Tradeoffs

- Closing thought

What “closing the loop” actually means

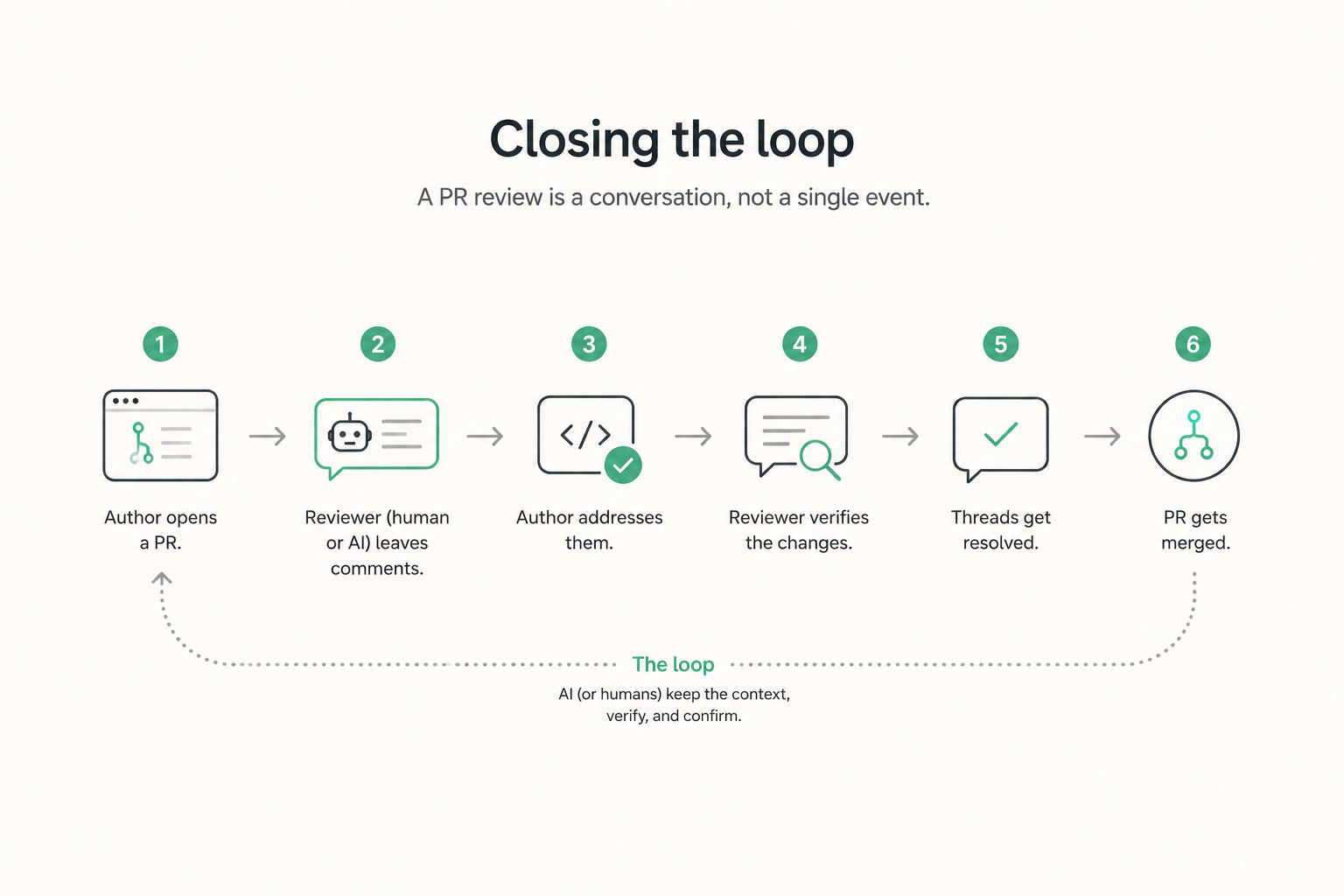

A PR review is not a single event. It’s a small conversation with a few moving parts:

1. Author opens a PR.

2. Reviewer (human or AI) leaves comments.

3. Author addresses them.

4. Reviewer verifies the changes.

5. Threads get resolved.

6. PR gets merged.

Most AI tooling jumps in at step 2 and disappears. The interesting part, the part that affects code quality, is steps 3 to 5.

If the AI never reads the response to its own comments, it’s not reviewing. It’s just commenting.

The setup

Nothing exotic here. The pieces are:

- Windsurf as the AI agent with access to the repo.

- GitHub CLI (gh) as the bridge between Windsurf and the PR data.

- A small set of prompts and conventions to keep the agent honest.

The key insight: gh already exposes everything you need. PRs, review threads, comments, diffs, statuses. You don’t need a custom GitHub App for this. You need a terminal and a clear workflow.

gh pr view 1234 --json title,body,reviews,comments

gh pr diff 1234

gh api repos/{owner}/{repo}/pulls/1234/comments

That last one is the one most people miss. It returns inline review comments, which is where the real conversation happens.
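To make that concrete, here is a minimal sketch of how the agent side could turn that endpoint's output into threads. It assumes each reply carries an `in_reply_to_id` pointing at the first comment of its thread, and it only reads the first page of results. The function names are mine, not part of gh.

```python
import json
import subprocess
from collections import defaultdict


def fetch_review_comments(pr_number: int) -> list[dict]:
    """Return the first page (up to 100) of inline review comments for a PR."""
    result = subprocess.run(
        # {owner} and {repo} are filled in by gh from the current repository.
        ["gh", "api",
         f"repos/{{owner}}/{{repo}}/pulls/{pr_number}/comments?per_page=100"],
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)


def group_into_threads(comments: list[dict]) -> dict[int, list[dict]]:
    """Group comments by thread, keyed by the id of the thread's first comment."""
    threads = defaultdict(list)
    for comment in comments:
        # Replies point at the root comment; a root comment has no such field.
        root_id = comment.get("in_reply_to_id") or comment["id"]
        threads[root_id].append(comment)
    return dict(threads)
```

Calling `group_into_threads(fetch_review_comments(1234))` gives one entry per conversation, which is the unit the rest of the loop works with. PRs with more than 100 inline comments would need pagination on top of this.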

The workflow

Here’s the loop I ended up with.

1. Pull the PR context

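Windsurf runs gh pr view and gh pr diff to load the full picture: description, files changed, existing reviews. Unresolved threads need one more call, since resolution state is exposed through the GraphQL API (gh api graphql) rather than through gh pr view.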

2. Generate a structured review

Instead of free-form comments, the agent produces a list of findings with:

- file and line

- severity (blocker, suggestion, nit)

- rationale

- suggested fix when possible

This structure matters later, when we need to track which findings were addressed.
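A finding with those four fields could be modeled roughly like this. It is a sketch, not the exact schema I use: the class names and the comment format produced by `to_comment_body` are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Severity(str, Enum):
    BLOCKER = "blocker"
    SUGGESTION = "suggestion"
    NIT = "nit"


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    severity: Severity
    rationale: str
    suggested_fix: Optional[str] = None  # only set when a fix is possible

    def to_comment_body(self) -> str:
        """Render the finding as the text of one inline comment."""
        body = f"[{self.severity.value}] {self.rationale}"
        if self.suggested_fix:
            body += f"\n\nSuggested fix: {self.suggested_fix}"
        return body
```

Putting the severity in the comment text itself means a later pass can recover it from the PR alone, without any side storage.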

3. Post the review

The review is posted with gh api (gh pr review only covers the top-level body and verdict), so the comments land on the PR as inline review comments, not as a wall of text in a single comment.
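One way to do the posting, sketched under a few assumptions: findings arrive as plain dicts with `file`, `line`, `severity` and `rationale` keys (my naming), every comment targets the new side of the diff, and the review event is always `COMMENT` so the agent never approves or blocks on its own.

```python
import json
import subprocess


def build_review_payload(findings: list[dict], summary: str) -> dict:
    """Build the JSON body for the 'create a review' REST endpoint."""
    return {
        "event": "COMMENT",  # never APPROVE or REQUEST_CHANGES from the agent
        "body": summary,
        "comments": [
            {
                "path": item["file"],
                "line": item["line"],
                "side": "RIGHT",  # comment on the new version of the file
                "body": f"[{item['severity']}] {item['rationale']}",
            }
            for item in findings
        ],
    }


def post_review(pr_number: int, payload: dict) -> None:
    """Send the payload through gh, reading the request body from stdin."""
    subprocess.run(
        ["gh", "api", "--method", "POST",
         f"repos/{{owner}}/{{repo}}/pulls/{pr_number}/reviews",
         "--input", "-"],
        input=json.dumps(payload), text=True, check=True,
    )
```

Sending all findings in one review keeps them grouped on the PR and triggers a single notification instead of one per comment.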

4. Wait for the author’s response

This is the part that’s usually missing. The agent doesn’t just walk away.
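How the agent notices a response is up to you. A simple option, sketched here as an assumption rather than what any tool does out of the box, is to snapshot the head commit and the inline comment count right after posting, then compare later. The snapshot shape and function names are hypothetical.

```python
import subprocess


def _gh(args: list[str]) -> str:
    """Run a gh command and return its trimmed stdout."""
    result = subprocess.run(["gh", *args], capture_output=True, text=True, check=True)
    return result.stdout.strip()


def take_snapshot(pr_number: int) -> dict:
    """Record the head commit and the number of inline comments (first page only)."""
    head = _gh(["pr", "view", str(pr_number),
                "--json", "headRefOid", "--jq", ".headRefOid"])
    count = _gh(["api",
                 f"repos/{{owner}}/{{repo}}/pulls/{pr_number}/comments?per_page=100",
                 "--jq", "length"])
    return {"head_sha": head, "inline_comments": int(count)}


def detect_changes(before: dict, after: dict) -> dict:
    """Compare two snapshots and report what moved in between."""
    return {
        "new_commits": before["head_sha"] != after["head_sha"],
        "new_inline_comments": max(0, after["inline_comments"] - before["inline_comments"]),
    }
```

If neither value moved, there is nothing to re-review yet and the agent can skip the second pass.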

5. Re-read the PR

After the author pushes new commits or replies to threads, Windsurf pulls the PR again and compares:

- which findings were addressed in the new diff

- which threads have a reply but no code change

- which threads were resolved without a clear fix
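Those three buckets can be pre-sorted mechanically before the agent reads anything in detail. The sketch below is deliberately coarse: it assumes each thread is a dict with `path`, `reply_count` and `is_resolved` (a shape I made up), and that `changed_files` holds the files touched by commits pushed after the review. A changed file only means the finding is worth re-checking, not that it was fixed.

```python
from enum import Enum


class Status(str, Enum):
    CODE_CHANGED = "code_changed"                        # re-check against the new diff
    REPLIED_WITHOUT_CHANGE = "replied_without_change"    # author answered, no code moved
    RESOLVED_WITHOUT_CHANGE = "resolved_without_change"  # closed without a visible fix
    UNTOUCHED = "untouched"                              # no reply, no change


def triage(thread: dict, changed_files: set[str]) -> Status:
    """Assign a thread to one of the buckets the follow-up pass cares about."""
    if thread["path"] in changed_files:
        return Status.CODE_CHANGED
    if thread["is_resolved"]:
        return Status.RESOLVED_WITHOUT_CHANGE
    if thread["reply_count"] > 0:
        return Status.REPLIED_WITHOUT_CHANGE
    return Status.UNTOUCHED
```

The actual judgment (is the finding really addressed?) still belongs to the agent. This only decides which threads deserve that attention first.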

6. Post a follow-up

The agent then posts a short follow-up: confirming what was fixed, flagging what still looks unresolved, and resolving the threads it’s confident about.
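A follow-up can be as plain as two lists. This sketch renders one and posts it as a regular PR comment with gh pr comment. The wording and layout of the message are my own choice, not a fixed format.

```python
import subprocess


def render_follow_up(fixed: list[str], still_open: list[str]) -> str:
    """Render a short follow-up: what was fixed and what still looks open."""
    lines = ["Follow-up on the previous review:", ""]
    lines.append(f"Fixed ({len(fixed)}):")
    lines += [f"- {item}" for item in fixed] or ["- none"]
    lines.append("")
    lines.append(f"Still open ({len(still_open)}):")
    lines += [f"- {item}" for item in still_open] or ["- none"]
    return "\n".join(lines)


def post_follow_up(pr_number: int, body: str) -> None:
    """Post the follow-up as a PR comment, passing the body on stdin."""
    subprocess.run(
        ["gh", "pr", "comment", str(pr_number), "--body-file", "-"],
        input=body, text=True, check=True,
    )
```

Keeping the counts in the section titles lets a human see at a glance whether anything is left without reading the list.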

Where Windsurf fits

Windsurf is doing three things in this setup:

- Reading: parsing the diff, the comments, the replies.

- Reasoning: deciding whether a finding is addressed, partially addressed, or ignored.

- Writing: composing the follow-up review.

What it’s not doing: deciding to merge, approving on its own, or silently rewriting code. Those stay with humans.

The agent has a clear scope. That’s part of why it works.

Where the GitHub CLI fits

gh is the boring, reliable part of the system. And honestly, that’s why it works so well.

A few commands carry most of the weight:

# Read PR metadata and threads

gh pr view <num> --json reviews,comments,commits

# Read inline review comments (the conversation)

gh api repos/{owner}/{repo}/pulls/<num>/comments

# Reply to a specific thread

gh api -X POST \
  repos/{owner}/{repo}/pulls/<num>/comments/<id>/replies \
  -f body="..."

# Resolve a thread (GraphQL)

gh api graphql -f query='mutation { resolveReviewThread(...) }'
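For the resolve step, one detail is easy to trip over: the mutation takes the GraphQL node ID of the review thread, not the numeric comment id from the REST endpoints. The sketch below builds the two gh invocations involved, one to list threads with their IDs and one to resolve a thread. The helper names are hypothetical, and the list query only looks at the first 100 threads.

```python
LIST_THREADS = """
query($owner: String!, $repo: String!, $number: Int!) {
  repository(owner: $owner, name: $repo) {
    pullRequest(number: $number) {
      reviewThreads(first: 100) {
        nodes { id isResolved path }
      }
    }
  }
}
"""

RESOLVE_THREAD = """
mutation($threadId: ID!) {
  resolveReviewThread(input: {threadId: $threadId}) {
    thread { id isResolved }
  }
}
"""


def list_threads_args(pr_number: int) -> list[str]:
    """Arguments for listing review threads; gh fills {owner} and {repo} itself."""
    return ["gh", "api", "graphql",
            "-F", "owner={owner}", "-F", "repo={repo}",
            "-F", f"number={pr_number}",  # -F converts the value to an integer
            "-f", f"query={LIST_THREADS}"]


def resolve_thread_args(thread_id: str) -> list[str]:
    """Arguments for resolving one thread by its GraphQL node ID."""
    return ["gh", "api", "graphql",
            "-f", f"threadId={thread_id}",  # -f keeps the ID as a raw string
            "-f", f"query={RESOLVE_THREAD}"]
```

Either list can be handed to `subprocess.run(..., check=True)`. Building the arguments separately from running them makes it easy to log exactly what the agent is about to do before it touches the PR.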

No SDK. No webhook server. No new infrastructure. Just CLI calls the agent can run.

Things that surprised me

A few notes from actually using this:

- Most “AI review noise” comes from not re-reading the PR. Once the agent verifies its own comments against the new diff, it stops repeating itself.

- Resolving threads is underrated. When the agent confidently resolves what’s been addressed, the PR becomes much easier for humans to scan.

- Severity labels change behavior. When the agent has to commit to “blocker” vs “nit”, it becomes more careful. Vague comments mostly disappear.

- The loop is more valuable than the initial review. The first pass is fine. The follow-up is where the agent earns its place.

Tradeoffs

This isn’t free, and a few things are worth being honest about:

- Latency. Two passes per PR means the agent is doing more work. For small PRs it’s fine. For large ones you feel it.

- Trust calibration. Auto-resolving threads only works if the agent is right most of the time. Start conservative. Let humans resolve, and have the agent only suggest resolution.

- Prompt drift. As you tweak prompts, the agent’s tone and severity thresholds shift. Pin them. Review them like code.

- Repo-specific context. Generic review agents miss the conventions that matter. Feeding the agent your architecture notes and review checklist makes a real difference.
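The "start conservative" advice can be encoded as a small policy object, so that moving from suggesting to auto-resolving is an explicit, reviewable change. This is only a sketch: it assumes the agent can attach a confidence score between 0 and 1 to each verdict, and the 0.9 threshold is an arbitrary placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResolutionPolicy:
    auto_resolve: bool = False   # conservative default: humans resolve threads
    min_confidence: float = 0.9  # placeholder threshold, tune per repo


def decide(confidence: float, policy: ResolutionPolicy) -> str:
    """Decide what the agent may do with a thread it believes is addressed."""
    if confidence < policy.min_confidence:
        return "leave_open"
    return "resolve" if policy.auto_resolve else "suggest_resolution"
```

With the default policy, `decide(0.95, ResolutionPolicy())` yields a suggestion rather than a resolved thread. Flipping `auto_resolve` is a one-line diff that can go through normal review, the same way pinned prompts should.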

Closing thought

The interesting part of AI-assisted PR review isn’t the comments. It’s whether the agent actually engages with what happens after.

A reviewer that never reads the response isn’t a reviewer. Closing that loop, even with something as simple as Windsurf plus gh, changes the whole feel of the workflow.

Still iterating on it. But this is the first version that actually feels useful.