PR reviews look simple from the outside, but they quickly turn messy when a review has 20+ comments of mixed severity and a couple of them are actually architectural change requests hidden among the nitpicks.

Today I spent some time wiring up the Jira and GitHub MCP servers to build a small workflow around that exact problem.

These are my notes.

Table of contents

- What I wanted to solve

- Why MCP and not just a script

- The setup

- The workflow

- How the classification works

- What the output looks like

- Tradeoffs and limitations

- Where I want to take this next

What I wanted to solve

The trigger was simple. A PR with a long review thread, comments from two reviewers, some of them contradicting each other, and a linked Jira ticket with extra context that nobody was reading.

I wanted something that could:

- pull all active comments from a PR

- pull the linked Jira ticket and its acceptance criteria

- classify each comment by type and severity

- propose a plan to address them

- flag the ones that contradict the ticket scope

Not to replace my judgment. Just to remove the boring part of reading, sorting and grouping.

Why MCP and not just a script

I could have written a script with the GitHub and Jira APIs. I’ve done that before. The problem is that the script becomes another thing to maintain, and the moment you want to ask follow-up questions (“what about only the comments from the senior reviewer?”) you’re stuck.

MCP changes the shape of this.

Instead of a rigid script, the LLM gets tools to query GitHub and Jira directly, and I get a conversation around the data. The workflow becomes:

- structured where it needs to be

- flexible where rigid scripts hurt

- inspectable, because every tool call is visible

MCP is not magic. It’s just a clean way to give an LLM controlled access to your tools.

The setup

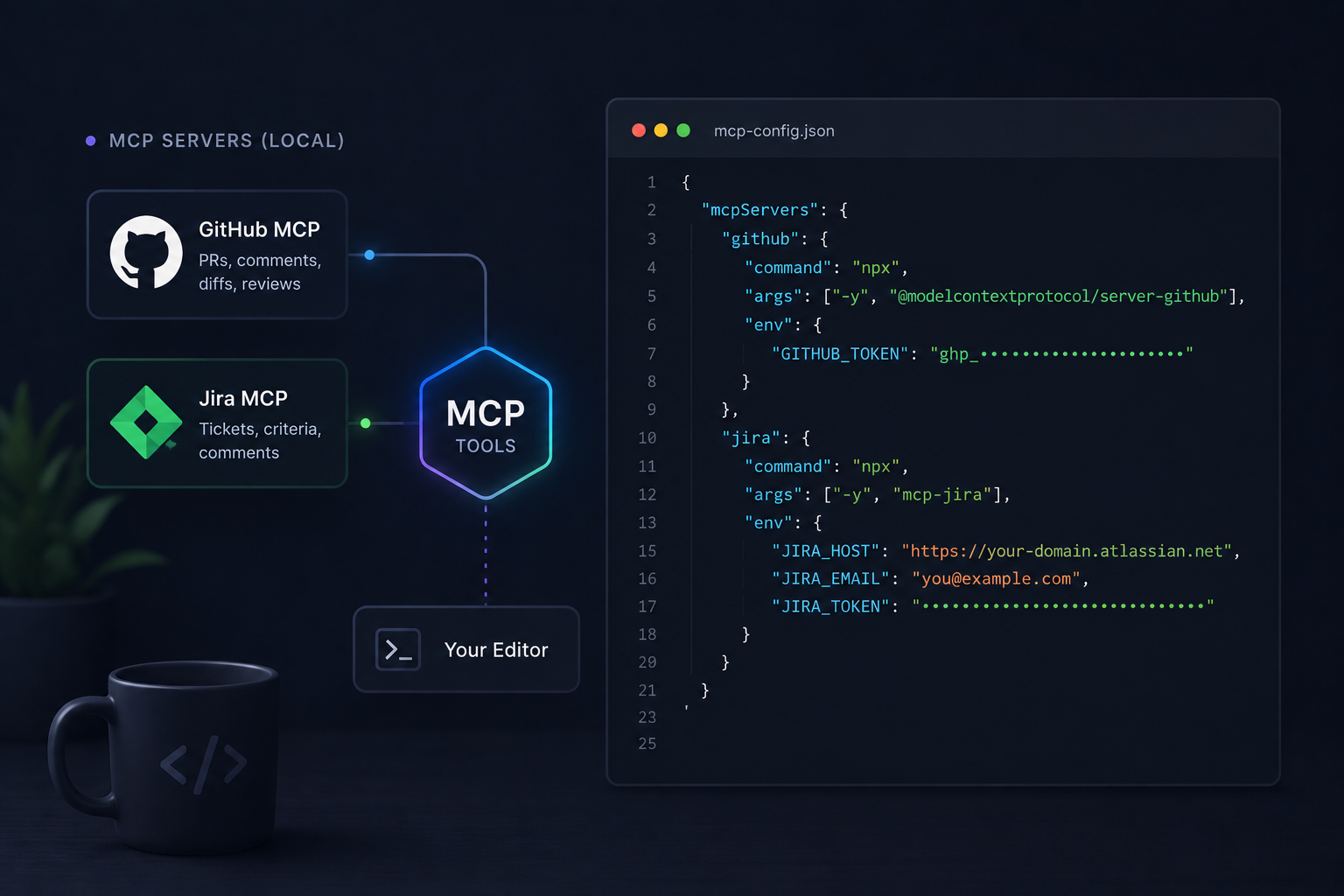

The two pieces I configured:

- GitHub MCP server — for reading PRs, comments, diffs and review threads.

- Jira MCP server — for reading the linked ticket, its description, acceptance criteria and comments.

Both run locally and authenticate with personal tokens. Nothing fancy.

A minimal config looks roughly like this:

    {
      "mcpServers": {
        "github": {
          "command": "npx",
          "args": ["-y", "@modelcontextprotocol/server-github"],
          "env": { "GITHUB_PERSONAL_ACCESS_TOKEN": "..." }
        },
        "jira": {
          "command": "npx",
          "args": ["-y", "mcp-jira"],
          "env": {
            "JIRA_HOST": "...",
            "JIRA_EMAIL": "...",
            "JIRA_TOKEN": "..."
          }
        }
      }
    }

The exact server packages may differ depending on which implementation you pick. The important part is that both expose tools the model can call.
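Before pointing a client at a config like this, a quick sanity check can catch placeholders that were never filled in. The following is a minimal sketch, assuming the config is a JSON document with the `mcpServers` shape shown above. `check_mcp_config` is a hypothetical helper written for this post, not part of any MCP tooling, and the host value in the example is made up.

```python
import json


def check_mcp_config(text: str) -> list[str]:
    """Return a list of human-readable problems found in an MCP client config."""
    problems = []
    config = json.loads(text)
    servers = config.get("mcpServers", {})
    if not servers:
        problems.append("no servers defined under 'mcpServers'")
    for name, server in servers.items():
        if not server.get("command"):
            problems.append(f"{name}: missing 'command'")
        if not isinstance(server.get("args", []), list):
            problems.append(f"{name}: 'args' must be a list")
        for key, value in server.get("env", {}).items():
            # "..." is the placeholder used in the example config.
            if not value or value == "...":
                problems.append(f"{name}: env var {key} is not set")
    return problems


# Hypothetical config fragment: one value filled in, two left as placeholders.
example = """
{
  "mcpServers": {
    "jira": {
      "command": "npx",
      "args": ["-y", "mcp-jira"],
      "env": {"JIRA_HOST": "example.atlassian.net", "JIRA_EMAIL": "...", "JIRA_TOKEN": ""}
    }
  }
}
"""

for problem in check_mcp_config(example):
    print(problem)
```

It only checks the shape of the file. Whether the tokens are actually valid is something you find out on the first tool call.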

The workflow

The workflow itself is just a prompt with a clear structure. Something like:

- Given PR #1234, fetch all unresolved review comments.

- Fetch the linked Jira ticket and its acceptance criteria.

- For each comment:

  - classify type: bug, style, architecture, question, nitpick

  - classify severity: blocker, important, minor

  - check if it falls inside or outside the ticket scope

- Group related comments.

- Propose a plan ordered by severity and dependency.

- Flag contradictions between comments or between comments and the ticket.

That’s the whole thing. No agent framework, no orchestration layer. Just MCP tools and a prompt that the model executes step by step.
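If you run this often, the steps above can live in a small template instead of being retyped. This is a sketch under my own assumptions: `build_review_prompt` and its `repo` parameter are hypothetical, the repository name is a placeholder, and the prompt wording simply paraphrases the steps listed above.

```python
COMMENT_TYPES = ["bug", "style", "architecture", "question", "nitpick"]
SEVERITIES = ["blocker", "important", "minor"]


def build_review_prompt(pr_number: int, repo: str) -> str:
    """Build the step-by-step review-triage prompt for one pull request."""
    steps = [
        f"Given PR #{pr_number} in {repo}, fetch all unresolved review comments.",
        "Fetch the linked Jira ticket and its acceptance criteria.",
        "For each comment: classify type (" + ", ".join(COMMENT_TYPES) + "); "
        "classify severity (" + ", ".join(SEVERITIES) + "); "
        "check if it falls inside or outside the ticket scope.",
        "Group related comments.",
        "Propose a plan ordered by severity and dependency.",
        "Flag contradictions between comments or between comments and the ticket.",
    ]
    # Number the steps so the model (and the reader) can refer to them.
    return "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))


# "my-org/my-repo" is a placeholder repository name.
print(build_review_prompt(1234, "my-org/my-repo"))
```

Keeping the allowed types and severities in one place also makes it easier to check later that the model stayed within them.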

How the classification works

The interesting part is not the categories themselves, but how they connect.

A “style” comment is cheap to fix. An out-of-scope comment is a conversation with the product owner. An “architecture” comment may invalidate half the PR. Mixing them in the same review thread is what makes reviews exhausting.

The classification is rough on purpose:

- Type — what kind of change is being requested

- Severity — how much it blocks merging

- Scope — whether it belongs to the current ticket

- Effort — quick estimate, just to order the plan

The model is reasonable at this when it has the ticket context. Without the ticket, it tends to treat every comment as in-scope, which is exactly the problem I wanted to avoid.
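One way to keep the classification honest is to ask the model for JSON and validate it before using it. The sketch below assumes an output format I made up for illustration: a JSON array of objects with `comment_id`, `type`, `severity`, `in_scope` and `effort` keys. The effort scale is left free-form because these notes do not define one.

```python
import json
from dataclasses import dataclass

ALLOWED_TYPES = {"bug", "style", "architecture", "question", "nitpick"}
ALLOWED_SEVERITIES = {"blocker", "important", "minor"}


@dataclass
class Classification:
    comment_id: str
    type: str
    severity: str
    in_scope: bool
    effort: str  # free-form estimate; the scale is deliberately left open


def parse_classifications(raw: str) -> tuple[list[Classification], list[str]]:
    """Split model output into valid classifications and rejection messages."""
    valid, rejected = [], []
    for item in json.loads(raw):
        cid = str(item.get("comment_id", "?"))
        if item.get("type") not in ALLOWED_TYPES:
            rejected.append(f"{cid}: unknown type {item.get('type')!r}")
        elif item.get("severity") not in ALLOWED_SEVERITIES:
            rejected.append(f"{cid}: unknown severity {item.get('severity')!r}")
        elif not isinstance(item.get("in_scope"), bool):
            rejected.append(f"{cid}: in_scope must be true or false")
        else:
            valid.append(Classification(cid, item["type"], item["severity"],
                                        item["in_scope"], str(item.get("effort", ""))))
    return valid, rejected


# Hypothetical model output: the second item uses a type outside the allowed set.
raw_output = """[
  {"comment_id": "c1", "type": "bug", "severity": "blocker", "in_scope": true, "effort": "small"},
  {"comment_id": "c2", "type": "scope", "severity": "minor", "in_scope": false, "effort": "large"}
]"""

valid, rejected = parse_classifications(raw_output)
print(valid)
print(rejected)
```

Rejected items are worth reading rather than discarding: a comment the model could not fit into the categories is often one that deserves a human look.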

This is related to something I’ve mentioned before about complexity added too early — review comments often pull a PR in that direction without anyone noticing.

What the output looks like

A typical result looks like a small structured plan:

    PLAN FOR PR #1234

    Blockers (in scope)
    - Fix race condition in loadUser() (comment by @reviewer1)
    - Handle empty state in list (comment by @reviewer2)

    Important (in scope)
    - Extract form validation into a service
    - Replace inline styles with tokens

    Out of scope (suggest follow-up ticket)
    - Refactor auth module
    - Add e2e tests for the settings page

    Contradictions
    - @reviewer1 asks to inline the helper
    - @reviewer2 asks to extract it
      → needs alignment before acting

This is the part that saves real time. Not the AI writing code. The AI giving me a readable map of the review so I can decide where to spend energy.
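Once comments are classified, the grouping behind a plan like the one above is mechanical. Here is a rough sketch, assuming each classified comment is a plain dict with `summary`, `severity` and `in_scope` keys (hypothetical names). In my workflow the model writes the plan itself; this only illustrates the ordering, and it leaves out the contradictions section.

```python
SEVERITY_ORDER = {"blocker": 0, "important": 1, "minor": 2}


def render_plan(pr_number: int, items: list[dict]) -> str:
    """Render classified comments as a plain-text plan, in-scope items first."""
    lines = [f"PLAN FOR PR #{pr_number}"]
    # sorted() is stable, so items keep their input order within a severity.
    in_scope = sorted(
        (i for i in items if i["in_scope"]),
        key=lambda i: SEVERITY_ORDER[i["severity"]],
    )
    current = None
    for item in in_scope:
        if item["severity"] != current:
            current = item["severity"]
            heading = "Blockers" if current == "blocker" else current.capitalize()
            lines.append(f"{heading} (in scope)")
        lines.append(f"- {item['summary']}")
    out_of_scope = [i for i in items if not i["in_scope"]]
    if out_of_scope:
        lines.append("Out of scope (suggest follow-up ticket)")
        lines.extend(f"- {i['summary']}" for i in out_of_scope)
    return "\n".join(lines)


items = [
    {"summary": "Replace inline styles with tokens", "severity": "important", "in_scope": True},
    {"summary": "Refactor auth module", "severity": "important", "in_scope": False},
    {"summary": "Fix race condition in loadUser()", "severity": "blocker", "in_scope": True},
]
print(render_plan(1234, items))
```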

Tradeoffs and limitations

A few honest observations after using it for a day:

- The model is not great at understanding why a comment was written. It needs the ticket and sometimes the diff to make sense of intent.

- Long review threads with replies confuse it. I usually ask it to focus only on the latest state of each thread.

- Severity classification is subjective. I treat it as a suggestion, not a verdict.

- It still hallucinates file paths occasionally when summarizing diffs. Always verify before acting.

- Token cost grows fast on big PRs. Smaller, focused PRs work much better here — which is a good habit anyway.

The workflow is useful because it changes how I approach a review, not because it produces a perfect plan.
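For the long-thread problem, one option is to collapse each thread before the model sees it. A minimal sketch, assuming every comment is a dict with `thread_id`, `created_at` (an ISO 8601 timestamp without a timezone suffix) and `body`. Those field names are hypothetical and do not mirror what the GitHub API or any MCP server returns.

```python
from datetime import datetime


def latest_per_thread(comments: list[dict]) -> list[dict]:
    """Keep only the most recent comment of each review thread."""
    latest: dict[str, dict] = {}
    for comment in comments:
        seen = latest.get(comment["thread_id"])
        when = datetime.fromisoformat(comment["created_at"])
        if seen is None or when > datetime.fromisoformat(seen["created_at"]):
            latest[comment["thread_id"]] = comment
    return list(latest.values())


# Made-up sample data: thread t1 has a reply that supersedes the first comment.
thread_comments = [
    {"thread_id": "t1", "created_at": "2024-05-01T10:00:00", "body": "Please extract this helper."},
    {"thread_id": "t2", "created_at": "2024-05-01T10:05:00", "body": "Typo in the label."},
    {"thread_id": "t1", "created_at": "2024-05-01T11:30:00", "body": "Actually, inlining is fine."},
]

for comment in latest_per_thread(thread_comments):
    print(comment["thread_id"], comment["body"])
```

Dropping everything but the last reply loses context, so in practice it can be worth keeping the first comment of each thread next to the latest one.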

Where I want to take this next

A few directions I want to explore:

- A second pass that proposes concrete code changes for the “quick fix” category

- Automatic creation of follow-up Jira tickets for out-of-scope comments

- A feedback loop where I mark which classifications were wrong, and the prompt evolves

- Integration with commit messages and the PR description, so both stay aligned with the plan

The bigger idea behind all of this is something I keep coming back to: AI is most useful when it removes the tedious layer between thinking and acting. Reading 25 review comments and sorting them in your head is exactly that layer.

MCP just happens to be a clean way to plug it in.